attempto online - Research

23.08.2024

University of Tübingen and Google DeepMind Accelerate Neural Network Training Through Open-Source Competition

New Methods Significantly Cut Neural Network Training Time, Boosting AI Efficiency

Researchers from the Tübingen AI Center at the University of Tübingen, in partnership with Google DeepMind and other academic and industry labs, have run a competition that identified new algorithms that substantially speed up the training of neural networks. These methods could reduce training time by almost one-third, a major advance for machine learning.

AlgoPerf: A Public Competition to Find the Best Training Algorithms

As part of their mission to enhance artificial intelligence, the University of Tübingen, Google DeepMind and collaborators launched a public benchmark competition called AlgoPerf: Training Algorithms Benchmark. This open-source competition, managed by the MLCommons Algorithms Working Group, sought to find the most efficient mathematical methods (called algorithms) to significantly shorten the time it takes to train neural networks.

The competition's rules allowed participants to modify only the training methods, not the hardware or software used, so that any speed-up came from better algorithms rather than more powerful computers. Google provided the necessary computing resources for the competition, and the results are available to everyone under an Apache 2.0 license.

Global Participation from Leading AI Institutions

The competition drew 18 submissions from 10 teams representing top AI research institutions worldwide, including ELLIS Institute Tübingen, Max Planck Institute for Intelligent Systems, UCLA, the University of Cambridge, Meta AI, Samsung AI and the Vector Institute.

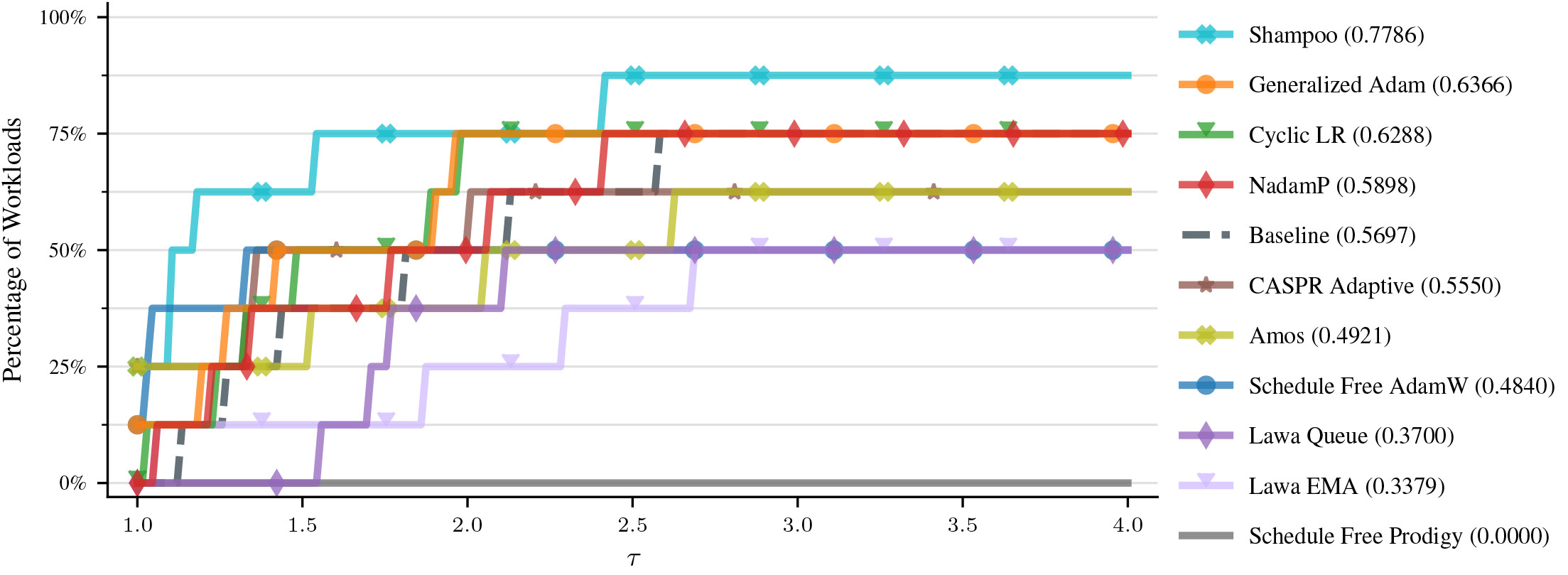

Participants were tasked with creating algorithms that could speed up neural network training for various real-world tasks. Some algorithms delivered impressive results, improving training speeds by as much as 28% compared to current state-of-the-art methods.
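One way to read a figure such as 28% is as a reduction in the time needed to reach a fixed quality target on identical hardware. The following is a minimal sketch under that assumption. The function names, the validation metric, and all numbers are hypothetical and are not taken from the competition's code or results.

```python
from typing import Optional, Sequence, Tuple

# Each history entry is (elapsed_seconds, validation_metric); higher metric is better.
History = Sequence[Tuple[float, float]]


def time_to_target(history: History, target: float) -> Optional[float]:
    """Return the first elapsed time at which the metric reaches the target, or None."""
    for elapsed_seconds, metric in history:
        if metric >= target:
            return elapsed_seconds
    return None


def relative_time_saving(baseline_seconds: float, candidate_seconds: float) -> float:
    """Fraction of the baseline's training time saved by the candidate."""
    return 1.0 - candidate_seconds / baseline_seconds


# Made-up training curves for illustration only (same hardware assumed for both runs).
baseline_history = [(600.0, 0.55), (1200.0, 0.68), (1800.0, 0.74), (2400.0, 0.76)]
candidate_history = [(600.0, 0.61), (1200.0, 0.72), (1728.0, 0.75), (2400.0, 0.77)]
target = 0.75

baseline_time = time_to_target(baseline_history, target)
candidate_time = time_to_target(candidate_history, target)

if baseline_time is not None and candidate_time is not None:
    saving = relative_time_saving(baseline_time, candidate_time)
    print(f"Time saved to reach target {target}: {saving:.0%}")
```

Measuring the time to reach a fixed target, rather than the quality after a fixed number of steps, lets algorithms with different per-step costs be compared on the same footing.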

Impact of the AlgoPerf Competition

Frank Schneider, AI researcher at the Tübingen AI Center and co-chair of the MLCommons Algorithms Working Group together with George Dahl from Google DeepMind, emphasized the importance of these advancements: "By cutting training time by almost one third, we're not just making neural networks more efficient, thereby making AI applications like Large Language Models more sustainable; we're also making the technology more accessible and scalable across different fields."

This unusual collaboration of otherwise competing academic and industry labs highlights the University of Tübingen's dedication to pushing the boundaries of machine learning research and fostering innovation through strategic partnerships. The AlgoPerf competition is expected to set new standards in neural network training and inspire further research into algorithmic efficiency. It raises an exciting possibility: what if the top algorithms from the competition could be combined to make training even faster?

More details on the competition and its outcomes are available on the MLCommons website (MLCommons AlgoPerf Competition). The related research paper is available as an arXiv preprint.

Claudia Brusdeylins, Tübingen AI Center

About the Tübingen AI Center

The Tübingen AI Center is a leading AI research institute at the University of Tübingen in collaboration with the Max Planck Institute for Intelligent Systems (MPI-IS). The Center’s goal is to advance robust learning systems for the benefit of society and the economy. Its 250 scientists, organized into 20 research groups, contribute to the development of socially valuable technologies as part of the “AI made in Europe” initiative. The Center also works closely with the ELLIS Institute Tübingen and Cyber Valley and is supported by the Ministry of Science, Research, and Arts of Baden-Württemberg and the German Federal Ministry of Education and Research.

About MLCommons

MLCommons is a global collaboration involving over 125 founding members and affiliates, including startups, leading companies, academic institutions, and non-profits. The organization aims to democratize AI through open industry-standard benchmarks that measure quality and performance, as well as by creating large-scale, diverse datasets to improve AI models.